Anders’ PhD defence presentation can be seen in the video below.

PhD defence of Anders Krogh Mortensen for the thesis “Estimation of Above-Ground Biomass and Nitrogen-Content of Agricultural Field Crops using Computer Vision”.

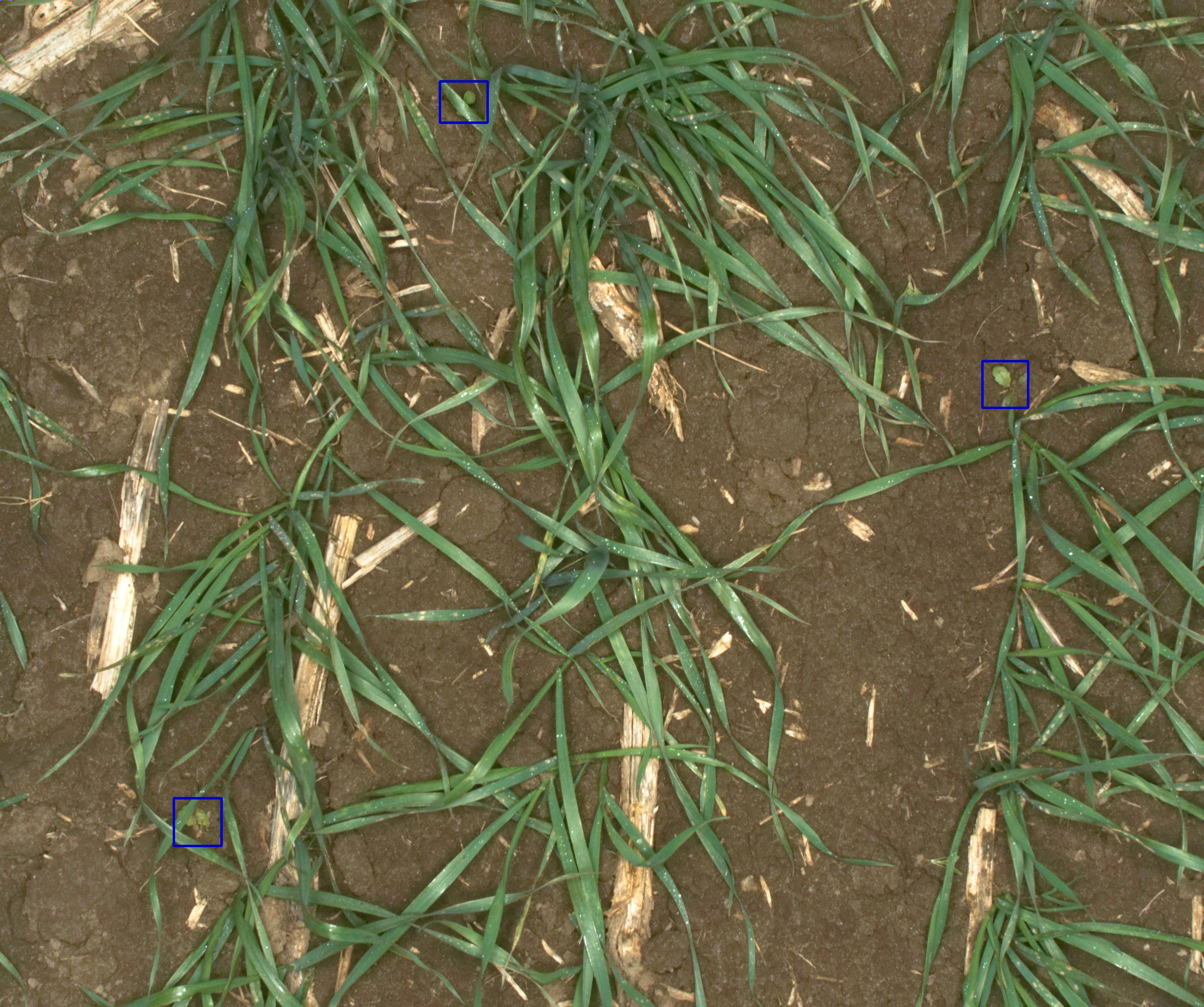

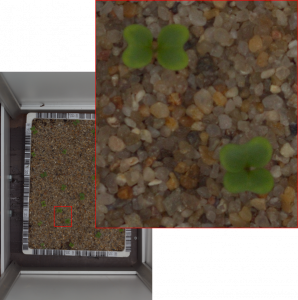

The thesis investigates yield estimation derived from RGB images and coloured 3D point clouds. Image segmentation methods based on image processing, handcrafted feature extraction, and deep learning are investigated. Furthermore, a novel method for segmenting lettuce in coloured 3D point clouds is proposed. Several yield models based on the segmented crops are investigated.

The research findings show that recent advances in deep learning can be transferred to the segmentation of (mixed) crops. They further show that (simple) growth models can be improved by using crop coverage to explain local variations in the crop.

PhD student:

Anders Krogh Mortensen: http://pure.au.dk/portal/en/anmo@agro…

PhD Supervisors:

Associate Professor René Gislum: http://pure.au.dk/portal/en/rg@agro.a…

Professor Henrik Karstoft: http://pure.au.dk/portal/en/hka@eng.a…

This PhD project is part of the VIRKN project, supported by a grant from the Green Development and Demonstration Program (GUDP) of the Danish Ministry of Environment and Food, and the Future Cropping project, supported by a grant from Innovation Fund Denmark.

– VIRKN: http://mst.dk/erhverv/groen-virksomhe…

– Future Cropping: https://futurecropping.dk/

– GUDP: http://mst.dk/erhverv/groen-virksomhe…

– Innovation Fund: https://innovationsfonden.dk